Data Repository for Haptic Algorithm Evaluation

Emanuele Ruffaldi¹, Dan Morris², Timothy Edmunds³, Dinesh Pai³, and Federico Barbagli²

¹PERCRO, Scuola Superiore S. Anna

²Computer Science Department, Stanford University

³Computer Science Department, Rutgers University

pit@sssup.it, {dmorris,barbagli}@robotics.stanford.edu, {tedmunds,dpai}@cs.rutgers.edu


Overview

This page hosts the public data repository described in our 2006 Haptics Symposium paper: Standardized Evaluation of Haptic Rendering Systems.

The goal of this repository is threefold:

- To provide publicly available data sets that describe the forces resulting when a probe is scanned over the surface of several physical objects, along with models of those objects. This data can serve as a "gold standard" for haptic rendering algorithms, providing the "correct" stream of forces that should result from a particular scanning trajectory.

- To provide a standard set of analyses that can be performed on particular implementations of haptic rendering algorithms, allowing objective and standardized evaluations of those algorithms.

- To present the results of these analyses, collected from several publicly available implementations of haptic rendering algorithms, as reference data and as examples of potential applications of these data sets.

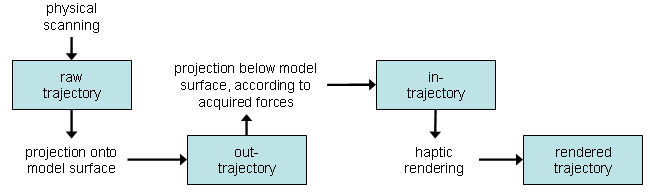

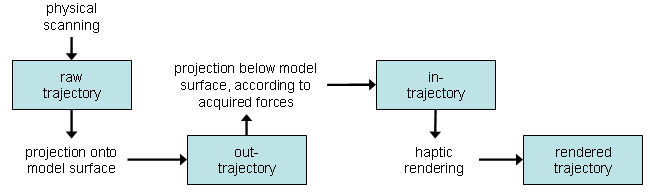

Data Processing Pipeline

Physical models are scanned and used for analysis of haptic rendering techniques according to the following pipeline; we use the term trajectory to refer to a stream of positions and forces.

- Scanning: The HAVEN at Rutgers University is used to scan several aspects of a physical model. First, the object is scanned with a laser range scanner to obtain a precise polygonal model of the surface. An optically tracked force sensor is then scanned manually across the surface of the object, providing position and orientation information that is time-referenced to force and torque data. We call this recorded trajectory a raw trajectory.

- Out-trajectory generation: We can determine when our scanning probe was in contact with the object using normal force information. Whenever the probe was in contact with the object, the reported position data should contain points that lie on the surface of the object. However, due to small amounts of noise in our measurement, the recorded path deviates slightly from the surface of the object. We therefore project this trajectory onto the surface of the object whenever the force data confirms that the probe was in contact with the object. We call this slightly modified trajectory an out-trajectory.

- In-trajectory generation: Haptic rendering systems often depend on allowing a haptic probe to penetrate the surface of a rigid object before penalty forces are applied to the haptic device. The data collected from the physical scan of a rigid object, however, contains normal forces and a trajectory that lies entirely on the surface of the object. We therefore analytically compute a trajectory that moves below the surface of the object and that, if passed as input to an ideal haptic rendering algorithm, would apply the same forces to the haptic device that we measured in our physical scan, and would graphically present a surface contact point that corresponds to the trajectory we measured in our physical scan. We call this hypothetical trajectory an in-trajectory.

- Haptic rendering: This in-trajectory can then be fed to any haptic rendering algorithm, in place of the position data that would typically come from a haptic device. The haptic rendering algorithm will generate a stream of forces (which would typically be applied to a haptic device) and, generally, a surface contact position (which would typically be rendered graphically). We call this trajectory a rendered trajectory.
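The out-trajectory and in-trajectory steps above can be illustrated with a short Python sketch for the simplest case, a planar object. This is not the repository's processing code. It assumes that the object is the plane through `plane_point` with outward unit normal `plane_normal`; that contact is declared when the normal force exceeds a hypothetical threshold `contact_threshold_n`; and that the "ideal" renderer is a spring between the surface contact point and the device, F = k (p_surface − p_device). Solving that spring law for the device position gives p_device = p_surface − F / k. The default k = 0.5 N/mm matches the stiffness quoted for the in.traj files below. All function names are illustrative.

```python
# Illustrative sketch only: out-/in-trajectory generation for a planar object.
# Assumptions (not from the repository): plane given by point + outward unit normal,
# a hypothetical contact force threshold, and a spring renderer F = k * (p_surface - p_device).

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def project_onto_plane(p, plane_point, plane_normal):
    """Orthogonal projection of point p onto the plane."""
    d = dot([pi - qi for pi, qi in zip(p, plane_point)], plane_normal)
    return tuple(pi - d * ni for pi, ni in zip(p, plane_normal))

def make_out_trajectory(raw, plane_point, plane_normal, contact_threshold_n=0.05):
    """raw: list of (position_mm, force_N). Snap samples that are in contact onto the surface."""
    out = []
    for pos, force in raw:
        if dot(force, plane_normal) > contact_threshold_n:  # normal force => in contact
            pos = project_onto_plane(pos, plane_point, plane_normal)
        out.append((tuple(pos), tuple(force)))
    return out

def make_in_trajectory(out, stiffness_n_per_mm=0.5):
    """Place the device below the surface so that the spring renderer reproduces each force."""
    in_traj = []
    for surface_pos, force in out:
        # From F = k * (p_surface - p_device): p_device = p_surface - F / k.
        device_pos = tuple(p - f / stiffness_n_per_mm for p, f in zip(surface_pos, force))
        in_traj.append((device_pos, (0.0, 0.0, 0.0)))  # in-trajectory forces are zero
    return in_traj
```

In free space the force is zero, so the in-trajectory coincides with the out-trajectory there. A real proxy renderer with friction would need a different inversion.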

Comparing our rendered trajectory to our raw trajectory provides a quantitative basis for evaluating a haptic rendering technique. This page will soon include quantitative results comparing various rendering algorithms according to the metrics presented in our paper.
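The analyses below report an RMS force error. The following minimal sketch shows one plausible way to compute it. It assumes that the error at each sample is the Euclidean norm of the difference between the reference and rendered force vectors, and that both streams are already aligned one-to-one in time. The paper defines the exact metric, and resampling is omitted here. `rms_force_error` is a hypothetical name.

```python
import math

def rms_force_error(reference_forces, rendered_forces):
    """RMS of the per-sample force-vector error |F_ref - F_rendered|, in newtons.

    Assumes both streams are non-empty, equal in length, and time-aligned.
    """
    if len(reference_forces) != len(rendered_forces) or not reference_forces:
        raise ValueError("force streams must be non-empty and of equal length")
    total = 0.0
    for f_ref, f_ren in zip(reference_forces, rendered_forces):
        total += sum((a - b) ** 2 for a, b in zip(f_ref, f_ren))  # squared vector error
    return math.sqrt(total / len(reference_forces))
```

For example, if both samples of a two-sample stream are off by a vector of length 5 N, the result is 5.0.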

This pipeline is summarized in the following diagram:

Data Formats

This section describes the data formats in which all files on this page are presented.

- Mesh files, representing scanned object geometry, are presented in .obj format. Meshes are in the same reference frame as the corresponding data files.

- Trajectory files are presented as .traj files: tab-delimited ASCII files containing position and force data, plus associated metadata.

The first section of a .traj file contains comments and metadata; each line begins with '#' and may contain one metadata field, of the format:

[field name]=[field value]

A field value extends from the '=' to the end of the line, and is generally a single number but may be text for some fields. The metadata portion of the file is terminated with the token DATA_START; all subsequent lines contain trajectory data.

A typical header looks like:

# points=31800

# columns=8

# column_labels=time(s) X(mm) Y(mm) Z(mm) Fx(N) Fy(N) Fz(N) ctime(s)

# DATA_START

This indicates that the file contains 31800 data points organized in 8 columns, labeled according to the field 'column_labels'. Other fields may appear in a data file to indicate particular parameters that were used to generate that file.

The data portion of the file is tab-delimited and formatted according to:

[time] [x] [y] [z] [Fx] [Fy] [Fz] [computation time] [...optional orientation fields...] [...optional torque fields...]

The "time offset" metadata field is for internal debugging only; it is not meaningful to other users of this data.

The "computation time" data field is meaningful only for computational results; it indicates how much time was spent computing this particular data point (i.e., it excludes time related to disk I/O, graphical rendering, etc.).
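The format described above is simple enough to parse in a few lines. The authors' own reader is the MATLAB function read_traj_file.m (listed under Code). The following Python function, `parse_traj`, is a hypothetical equivalent written only from the description on this page. It treats every '#' line before DATA_START as either a `name=value` field or a free-form comment, and splits each later line on tabs.

```python
def parse_traj(text):
    """Parse .traj contents into (metadata dict, column labels, list of numeric rows).

    Sketch based on the format description: '#' header lines hold at most one
    'name=value' field, '# DATA_START' ends the header, and data lines are tab-delimited.
    """
    metadata = {}
    rows = []
    in_data = False
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        if not in_data:
            if not line.startswith("#"):
                raise ValueError("expected a '#' header line before DATA_START")
            body = line[1:].strip()
            if body == "DATA_START":
                in_data = True
            elif "=" in body:
                name, value = body.split("=", 1)  # value runs to the end of the line
                metadata[name.strip()] = value.strip()
            # Any other '#' line is a free-form comment and is ignored.
            continue
        rows.append([float(tok) for tok in line.split("\t")])
    labels = metadata.get("column_labels", "").split()
    return metadata, labels, rows
```

Metadata values are kept as strings because some fields are text. A caller could convert, for example, `int(metadata["points"])` and compare it with `len(rows)`.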

Data Sets

This section contains several data sets acquired with our scanner and processed with the pipeline described above. Data is specified in the .traj format defined above. Note that the forces in an "in-trajectory" file are defined to be zero, and the normal forces in an "out-trajectory" file are defined to be one or zero, representing contact or non-contact with the object surface.

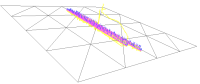

- plane0.zip (400KB) contains the trajectory files described above, for a simple plane. The following files are included:

- probe.traj: the raw trajectory

- out.traj: the out-trajectory

- in.traj: the in-trajectory, computed using a stiffness of 0.5 N/mm

- proxy.traj: the rendered trajectory, computed using the proxy algorithm as implemented in CHAI 1.25

- potential.traj: the rendered trajectory, computed using a potential-field algorithm that applies a penalty force to the nearest surface point at each iteration

- plane_4.obj: a mesh representing the plane
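The potential-field renderer used for potential.traj is described only briefly above. The following sketch shows a penalty force of that kind for a plane, the geometry of this data set. It assumes that the plane is given by a point and an outward unit normal, and that the penalty is a linear spring pulling the probe toward its nearest surface point. The stiffness value is illustrative, not taken from the renderer that produced potential.traj.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def potential_field_force(probe, plane_point, plane_normal, stiffness_n_per_mm=0.5):
    """Penalty force toward the nearest point of a plane with outward unit normal."""
    # Signed distance to the plane; negative means the probe has penetrated the object.
    signed = dot([p - q for p, q in zip(probe, plane_point)], plane_normal)
    if signed >= 0.0:
        return (0.0, 0.0, 0.0)  # free space: no force
    # The nearest surface point is probe - signed * n, so the spring acts along +n.
    depth = -signed
    return tuple(stiffness_n_per_mm * depth * n for n in plane_normal)
```

Feeding each in-trajectory position through a function like this in turn yields a rendered force stream. For a mesh, the nearest point would have to be searched over all triangles rather than computed in closed form.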


Code

This section contains utility code used to process our data formats, and will later be updated to include example analyses. Also see CHAI 3D for the haptic and graphic rendering code used for our analyses.

- read_traj_file.m, a Matlab function for reading the contents of a .traj file, including all data and metadata.

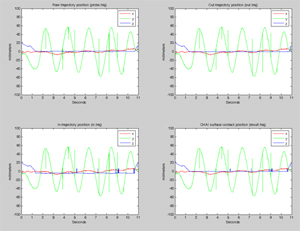

- plot_trajectories.m, a Matlab script for graphing the positions and forces presented in a series of .traj files. It uses the previous function (read_traj_file) for file input.

The output of this script, when run on the plane data set provided above, looks like this:

Example Analyses

This section contains several analyses performed using the above data. The results presented here serve as reference data for comparison to other algorithms/implementations, and the approaches presented here provide examples of potential applications for this repository.

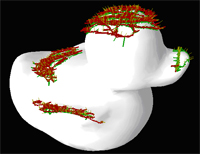

1. Friction Identification

The duck model and trajectories provided above were used to find the optimal (most realistic) coefficient of dynamic friction for haptic rendering of this object. This analysis uses the friction cone algorithm available in CHAI 3D (version 1.31). The in-trajectory derived from the physically scanned (raw) trajectory is fed to CHAI for rendering, and the resulting forces are compared to the physically scanned forces. The coefficient of dynamic friction is iteratively adjusted until a minimum error between the physical and rendered forces is achieved.
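The page does not say which search procedure was used to adjust the friction parameter. One standard choice for tuning a single parameter is golden-section search, sketched below. It assumes that the error is unimodal over the search interval. In practice, `error_fn` would be a hypothetical wrapper that renders the in-trajectory with CHAI at a given friction radius and returns the RMS force error against the physical scan. The test below substitutes a toy quadratic for that wrapper.

```python
import math

def golden_section_minimize(error_fn, lo, hi, tol=1e-4):
    """Minimize a unimodal scalar function on [lo, hi] by golden-section search."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0  # about 0.618
    a, b = lo, hi
    c = b - inv_phi * (b - a)
    d = a + inv_phi * (b - a)
    fc, fd = error_fn(c), error_fn(d)
    while b - a > tol:
        if fc < fd:
            # Minimum lies in [a, d]; reuse c as the new upper probe.
            b, d, fd = d, c, fc
            c = b - inv_phi * (b - a)
            fc = error_fn(c)
        else:
            # Minimum lies in [c, b]; reuse d as the new lower probe.
            a, c, fc = c, d, fd
            d = a + inv_phi * (b - a)
            fd = error_fn(d)
    return (a + b) / 2.0
```

Each iteration costs one rendering pass and shrinks the interval by about 38%. This keeps the number of full-trajectory renders small compared with a uniform sweep.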

This analysis demonstrates the use of our repository for improving the realism of a haptic rendering system, and also quantifies the value of using friction in a haptic simulation, in terms of mean-squared-force-error.

Results for the no-friction and optimized-friction cases follow, including the relative computational cost in floating-point operations:

| Dynamic friction radius (mm) | RMS force error (N) | Floating-point Ops |
| --- | --- | --- |
| 0.00000 (disabled) | 0.132030 | 10.36M |
| 0.300800 | 0.0673061 | 10.77M |

Friction identification: summary of results

We observe that the trajectory computed with friction enabled has roughly half the RMS force error of the no-friction trajectory (0.067 N vs. 0.132 N), indicating a more realistic rendering, with a negligible (about 4%) increase in floating-point computation.

- duck_friction_example.zip (400KB) contains all the files used for this analysis:

- raw-Back.traj: the raw trajectory (a subset of the above duck trajectory, collected on the "back" of the duck)

- out-Back-3.traj: the out-trajectory

- in-Back-3.traj: the in-trajectory, computed using a stiffness of 0.5 N/mm

- proxy-Back-3-friction.traj: the rendered trajectory, computed using the proxy algorithm as implemented in CHAI 1.31 and a dynamic friction radius of 0.3008 mm (the optimized value obtained from the iterative process described above)

- proxy-Back-3-flat.traj: the rendered trajectory, computed using the same proxy but no friction

- duck001_3k.obj: a mesh representing the duck, reduced to 3000 polygons for faster computation (used for all of these trajectories)

2. Comparison Between Proxy (god-object) and Voxel-Based Haptic Rendering

The duck model and trajectories provided above were used to compare the relative force errors produced by proxy (god-object) and voxel-based haptic rendering algorithms for a particular trajectory, and to assess the impact of voxel resolution on the accuracy of voxel-based rendering. This analysis does not include any cases in which the proxy provides geometric correctness that the voxel-based rendering could not; i.e., the virtual haptic probe never "pops through" the model.

Voxel-based rendering was performed by creating a fixed voxel grid and computing the nearest triangle to each voxel center. The stored triangle positions and surface normals are used to render forces for each voxel through which the probe passes.
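The voxel scheme just described can be sketched as follows. This is a reconstruction under stated assumptions, not the code used for the analysis. The closest point on each triangle is found with the standard Voronoi-region closest-point test. Each voxel stores that closest point together with the face normal (taken from counter-clockwise vertex winding). The online force is a penalty spring along the stored normal, proportional to how far the probe lies behind the stored triangle's plane. Grid dimensions, stiffness, and function names are illustrative. The test uses a 4×4×4 grid, where the analysis used 32³ and 64³.

```python
import math
from itertools import product

# Minimal vector helpers on 3-tuples.
def sub(u, v): return tuple(a - b for a, b in zip(u, v))
def add(u, v): return tuple(a + b for a, b in zip(u, v))
def scale(u, s): return tuple(a * s for a in u)
def dot(u, v): return sum(a * b for a, b in zip(u, v))
def cross(u, v):
    return (u[1] * v[2] - u[2] * v[1], u[2] * v[0] - u[0] * v[2], u[0] * v[1] - u[1] * v[0])
def normalize(u): return scale(u, 1.0 / math.sqrt(dot(u, u)))

def closest_point_on_triangle(p, a, b, c):
    """Closest point to p on triangle abc, via vertex/edge/face Voronoi regions."""
    ab, ac, ap = sub(b, a), sub(c, a), sub(p, a)
    d1, d2 = dot(ab, ap), dot(ac, ap)
    if d1 <= 0 and d2 <= 0:
        return a
    bp = sub(p, b)
    d3, d4 = dot(ab, bp), dot(ac, bp)
    if d3 >= 0 and d4 <= d3:
        return b
    vc = d1 * d4 - d3 * d2
    if vc <= 0 and d1 >= 0 and d3 <= 0:
        return add(a, scale(ab, d1 / (d1 - d3)))  # on edge ab
    cp = sub(p, c)
    d5, d6 = dot(ab, cp), dot(ac, cp)
    if d6 >= 0 and d5 <= d6:
        return c
    vb = d5 * d2 - d1 * d6
    if vb <= 0 and d2 >= 0 and d6 <= 0:
        return add(a, scale(ac, d2 / (d2 - d6)))  # on edge ac
    va = d3 * d6 - d5 * d4
    if va <= 0 and d4 - d3 >= 0 and d5 - d6 >= 0:
        return add(b, scale(sub(c, b), (d4 - d3) / ((d4 - d3) + (d5 - d6))))  # on edge bc
    denom = 1.0 / (va + vb + vc)  # interior of the face
    return add(a, add(scale(ab, vb * denom), scale(ac, vc * denom)))

def build_voxel_grid(triangles, origin, cell, n):
    """Offline step: for each voxel centre, store the nearest triangle's closest point
    and unit face normal. Memory grows as n**3."""
    grid = {}
    for i, j, k in product(range(n), repeat=3):
        centre = add(origin, scale((i + 0.5, j + 0.5, k + 0.5), cell))
        best = None
        for a, b, c in triangles:
            q = closest_point_on_triangle(centre, a, b, c)
            d2 = dot(sub(centre, q), sub(centre, q))
            if best is None or d2 < best[0]:
                best = (d2, q, normalize(cross(sub(b, a), sub(c, a))))
        grid[(i, j, k)] = best[1:]
    return grid

def voxel_force(grid, origin, cell, n, p, stiffness):
    """Online step: penalty force from the data stored in the voxel containing probe p."""
    idx = tuple(int(math.floor((p[d] - origin[d]) / cell)) for d in range(3))
    if any(i < 0 or i >= n for i in idx):
        return (0.0, 0.0, 0.0)  # probe outside the grid: no force
    q, normal = grid[idx]
    depth = dot(sub(q, p), normal)  # > 0 when p lies behind the stored triangle's plane
    return scale(normal, stiffness * depth) if depth > 0 else (0.0, 0.0, 0.0)
```

The split between `build_voxel_grid` and `voxel_force` mirrors the trade-off reported below: nearly all geometric work moves into initialization and memory, leaving only a table lookup and a dot product per haptic update.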

Results for the proxy algorithm and for the voxel-based algorithm (at two resolutions) follow, including the computational cost in floating-point operations, the initialization time in seconds (on a 1.5 GHz Pentium), and the memory overhead:

| Algorithm | Voxel Resolution | RMS Force Error (N) | Floating-point Ops | Init Time (s) | Memory |
| --- | --- | --- | --- | --- | --- |
| voxel | 32³ | 0.136 | 484K | 0.27 | 1MB |
| voxel | 64³ | 0.130 | 486K | 2.15 | 8MB |
| proxy | | 0.129 | 10.38M | 0.00 | 0 |

Proxy vs. voxel comparison: summary of results

We observe that the voxel-based approach offers comparable force error and significantly reduced online computation, at the cost of significant preprocessing time and memory overhead, relative to the proxy (god-object) approach. Analysis of this particular trajectory does not capture the fact that the proxy-based approach offers geometric correctness in many cases where the voxel-based approach would break down.

- duck_voxel_example.zip (400KB) contains all the files used for this analysis:

- raw-Back.traj: the raw trajectory (a subset of the above duck trajectory, collected on the "back" of the duck)

- out-Back-3.traj: the out-trajectory

- in-Back-3.traj: the in-trajectory, computed using a stiffness of 0.5 N/mm

- proxy-Back-3-flat.traj: the rendered trajectory, computed using the proxy algorithm

- voxel32-Back-3.traj: the rendered trajectory, computed using the voxel-based algorithm with 32³ voxels

- voxel64-Back-3.traj: the rendered trajectory, computed using the voxel-based algorithm with 64³ voxels

- duck001_3k.obj: a mesh representing the duck, reduced to 3000 polygons for faster computation (used for all of these trajectories)

3. Quantification of the Impact of Force Shading on Haptic Rendering

The duck model and trajectories provided above were used to quantify the impact of force shading on the accuracy of haptic rendering of a smooth, curved object. Force shading uses interpolated surface normals to determine the direction of feedback within a surface primitive, and is the haptic equivalent of Phong shading, which likewise interpolates vertex normals across each polygon. Friction confounds the effects of force shading, so the forces contained in each out-trajectory were projected onto the surface normal of the "true" (maximum-resolution) mesh before performing these evaluations.

Results are presented for several polygon-reduced versions of the duck model, including the average compute time per haptic sample (on a 3 GHz Pentium 4) and the total computational cost in floating-point operations (for the complete trajectory). We also indicate the percentage reduction in RMS force error due to force shading.

| Model Size (kTri) | Shading Enabled | Mean Compute Time (µs) | Floating-point Ops | RMS Force Error (N) | Error Reduction (%) |
| --- | --- | --- | --- | --- | --- |
| 0.2 | false | 10.1725 | 9.7136M | 0.0852431 | |
| 0.2 | true | 15.2588 | 12.176M | 0.0845509 | 0.81 |
| 0.5 | false | 10.1725 | 10.361M | 0.0305367 | |
| 0.5 | true | 15.2588 | 12.961M | 0.0230916 | 24.3 |
| 1 | false | 10.1725 | 9.7921M | 0.0310648 | |
| 1 | true | 15.2588 | 12.383M | 0.0230333 | 25.9 |
| 3 | false | 10.1725 | 10.380M | 0.0223957 | |
| 3 | true | 15.2588 | 12.744M | 0.0143149 | 36.0 |
| 6 | false | 10.1725 | 10.560M | 0.0164838 | |
| 6 | true | 15.2588 | 13.244M | 0.0125079 | 24.1 |
| 9 | false | 10.1725 | 10.644M | 0.0154634 | |
| 9 | true | 15.2588 | 13.476M | 0.0121254 | 21.6 |
| 64 | false | 10.1725 | 10.064M | 0.0129949 | |
| 64 | true | 15.2588 | 13.174M | 0.0111003 | 14.6 |
| 140 | false | 10.1725 | 9.2452M | 0.00859607 | |
| 140 | true | 15.2588 | 11.870M | 0.00891852 | -3.75 |

Force shading: summary of results

We observe that force shading results in a significant reduction in RMS force error, as much as 36%, for models of typical size, at some cost in floating-point operations (roughly 23% to 31% more, per the table above). For models at the maximum available resolution, force shading is not expected to contribute significantly to rendering quality, and indeed we see the error reduction fall off as meshes get very large. For models reduced to only several hundred polygons, the geometry no longer effectively captures the shape of the original mesh, and we see a falloff in error reduction as the quality of rendering decreases.
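The ingredients of this analysis can be sketched in Python. The code below covers three steps: interpolating per-vertex normals at a contact point (the core of force shading), removing the friction component of a measured force by projecting it onto a unit normal, and computing the "Error Reduction" percentage. It assumes that the contact point already lies on the triangle and that per-vertex normals come from the mesh. The reduction formula, 100 × (flat − shaded) / flat, is an assumed definition that reproduces, for example, the 0.81%, 25.9%, and −3.75% entries of the table. All function names are illustrative.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def barycentric(p, a, b, c):
    """Barycentric weights (wa, wb, wc) of point p with respect to triangle abc."""
    v0 = tuple(bi - ai for ai, bi in zip(a, b))
    v1 = tuple(ci - ai for ai, ci in zip(a, c))
    v2 = tuple(pi - ai for ai, pi in zip(a, p))
    d00, d01, d11 = dot(v0, v0), dot(v0, v1), dot(v1, v1)
    d20, d21 = dot(v2, v0), dot(v2, v1)
    denom = d00 * d11 - d01 * d01
    wb = (d11 * d20 - d01 * d21) / denom
    wc = (d00 * d21 - d01 * d20) / denom
    return 1.0 - wb - wc, wb, wc

def shaded_normal(p, tri, vertex_normals):
    """Force-shading direction: interpolate vertex normals at p, then renormalize."""
    w = barycentric(p, *tri)
    n = [sum(wi * vn[k] for wi, vn in zip(w, vertex_normals)) for k in range(3)]
    length = math.sqrt(dot(n, n))
    return tuple(x / length for x in n)

def project_force_onto_normal(force, normal):
    """Keep only the component of force along a unit normal (drops tangential friction)."""
    s = dot(force, normal)
    return tuple(s * x for x in normal)

def error_reduction_percent(rms_flat, rms_shaded):
    """Relative reduction in RMS force error, in percent (negative means shading hurt)."""
    return 100.0 * (rms_flat - rms_shaded) / rms_flat
```

For the 1 kTri row, `round(error_reduction_percent(0.0310648, 0.0230333), 1)` gives 25.9.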

- duck_shading_example.zip (7MB) contains all the files used for this analysis:

- probe-Back.traj: the raw trajectory (a subset of the above duck trajectory, collected on the "back" of the duck)

- out-Back-projected-*.traj: the out-trajectory for each of the polygon-reduced versions of the duck

- in-Back-projected-*.traj: the in-trajectory for each of the polygon-reduced versions of the duck

- proxy-Back-projected-*-flat.traj: the rendered trajectory for each of the polygon-reduced versions of the duck, with shading disabled

- proxy-Back-projected-*-shade.traj: the rendered trajectory for each of the polygon-reduced versions of the duck, with shading enabled

- duck001_*.obj: a mesh file for each of the polygon-reduced versions of the duck model

User Contributions

We hope to significantly expand the data sets available here over the coming months, and we welcome any of the following from users of this repository:

- Additional analyses performed using these data sets to evaluate specific haptic rendering algorithms or implementations

- Additional metrics that we can use to evaluate haptic trajectories (in place of our mean-squared-force-error metric), ideally (but not necessarily) implemented in Matlab

- Additional rendering algorithms, ideally (but not necessarily) implemented as subclasses of CHAI's proxy, on which we can run evaluations using our trajectories

- Additional data that you may be able to collect from a similar scanning apparatus

Contact

Please email us with any contributions or questions, or to discuss the data presented here. We welcome any information you have about how you're using this data or how it could be made more useful.

Written and maintained by Dan Morris

Support for this work was provided by NIH grant LM07295, the AO Foundation, and NSF grants IIS-0308157, EIA-0215887, ACI-0205671, and EIA-0321057. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.