Best of both worlds: human-machine collaboration for object annotation

Abstract

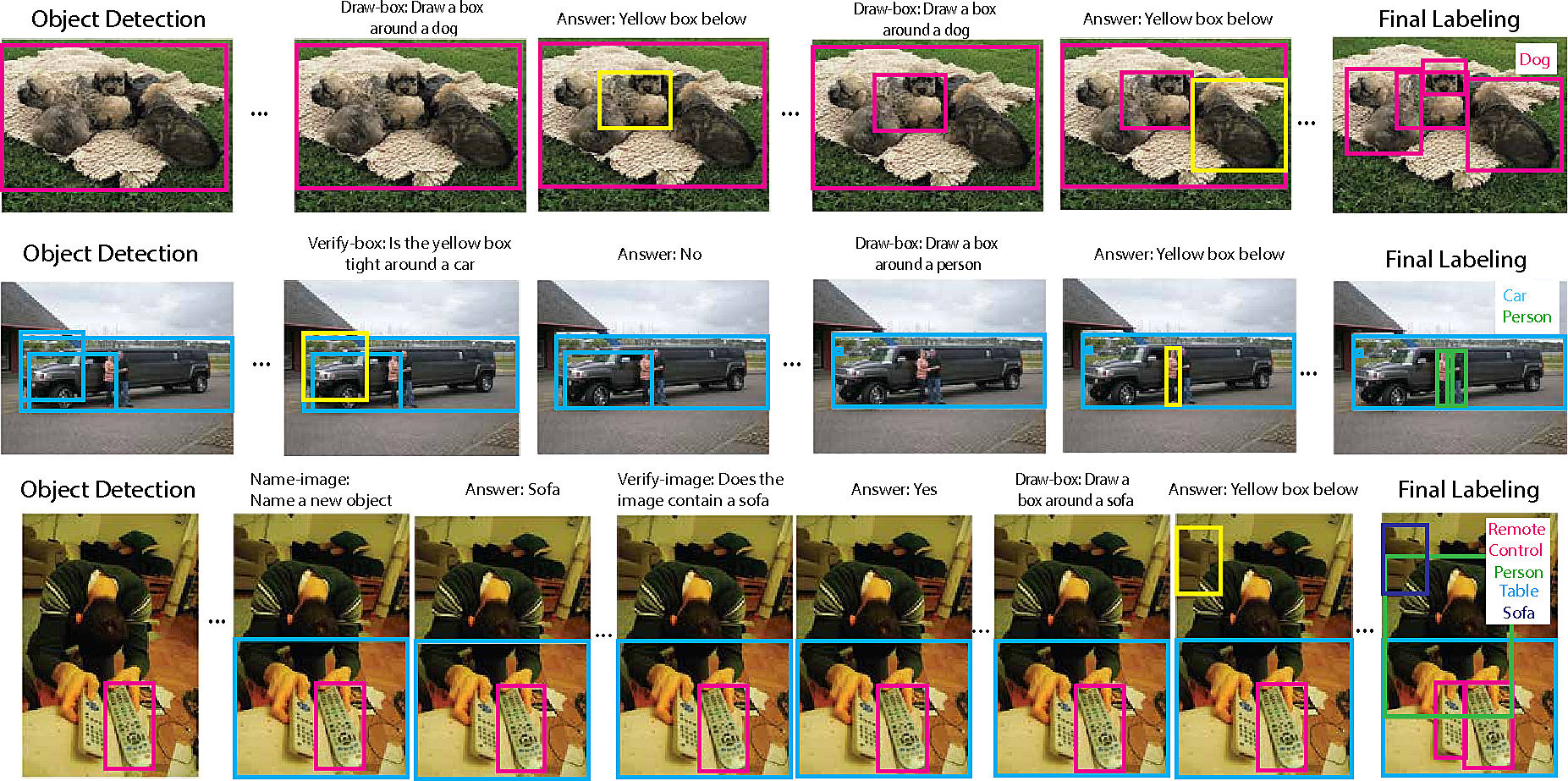

The long-standing goal of localizing every object in an image remains elusive. Manually annotating objects is quite expensive despite crowd engineering innovations. Current state-of-the-art automatic object detectors can accurately detect at most a few objects per image. This paper brings together the latest advancements in object detection and in crowd engineering into a principled framework for accurately and efficiently localizing objects in images. The input to the system is an image to annotate and a set of annotation constraints: desired precision, utility and/or human cost of the labeling. The output is a set of object annotations, informed by human feedback and computer vision. Our model seamlessly integrates multiple computer vision models with multiple sources of human input in a Markov Decision Process. We empirically validate the effectiveness of our human-in-the-loop labeling approach on the ILSVRC2014 object detection dataset.

Citation

@inproceedings{RussakovskyCVPR15,
  author = {Olga Russakovsky and Li-Jia Li and Li Fei-Fei},
  title = {Best of both worlds: human-machine collaboration for object annotation},
  booktitle = {CVPR},
  year = {2015}
}

Links

Qualitative results