In this project, we applied Associative Markov Networks to the the task of terrain classification. Terrain classification is very useful for autonomous mobile robots in real-world environments for path planning, target detection, and as a pre-processing step for other perceptual tasks.

The Stanford Segbot Project has provided us with a laser range maps of the Stanford campus collected by a moving robot equipped with SICK2 laser sensors. The data consists of around 35 million points, represented as 3D coordinates in an absolute frame of reference. Thus, the only available information is the location of points.

Here is a snapshot of an overhead view of the Stanford Quad:

Our goal will be to classify the laser range points into four classes: ground, building, tree, and shrubbery. Since classifying ground points is trivial given their absolute z-coordinate, we classify ground points deterministically by thresholding the z coordinate at a value close to 0. After we do that, we are left with approximately 20 million non-ground points.

Recall that each point is represented simply as a location in an absolute 3D coordinate system. That's why the features we use require pre-processing to infer properties of the local neighborhood of a point, such as how planar it is, or how much of the neighbors are close to the ground. The features described below are used in both flat and joint classification.

The features we use should be invariant to rotation in the x-y plane, as well as the density of the range scan, since scans tend to be sparser in regions farther from the robot.

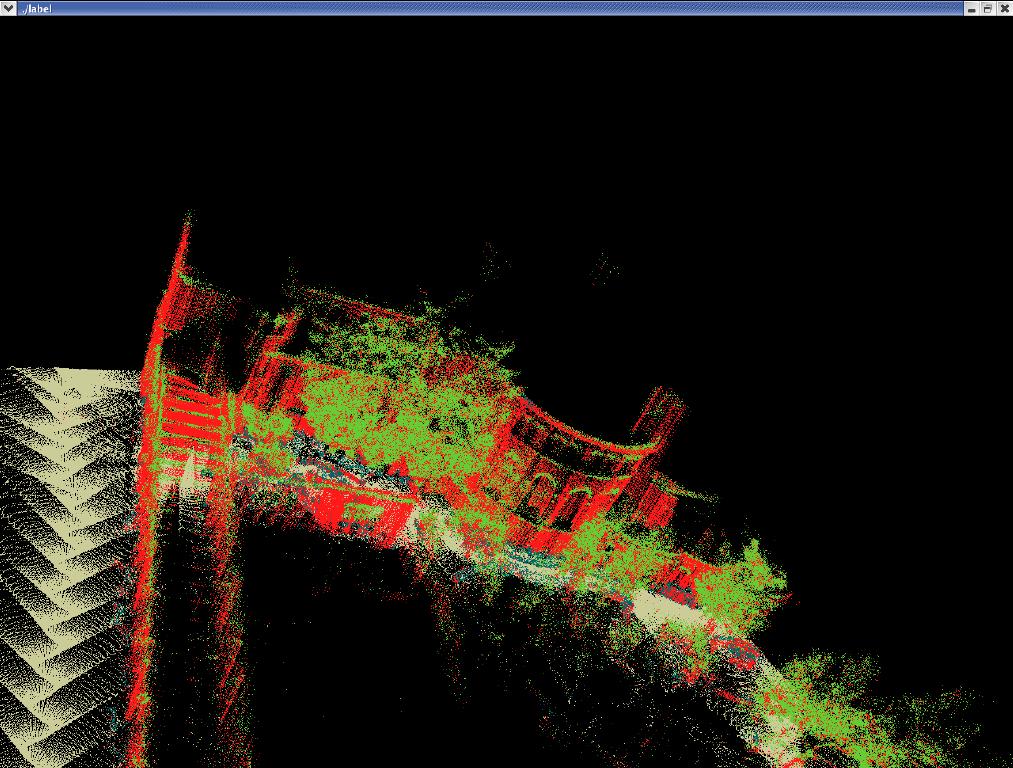

We can see that the flat model achieves reasonable performance in a lot of places, but fails to enforce local consistency of the classification predictions. For example arches on buildings and other less planar regions are consistently confused for trees, even though they are surrounded entirely by buildings.

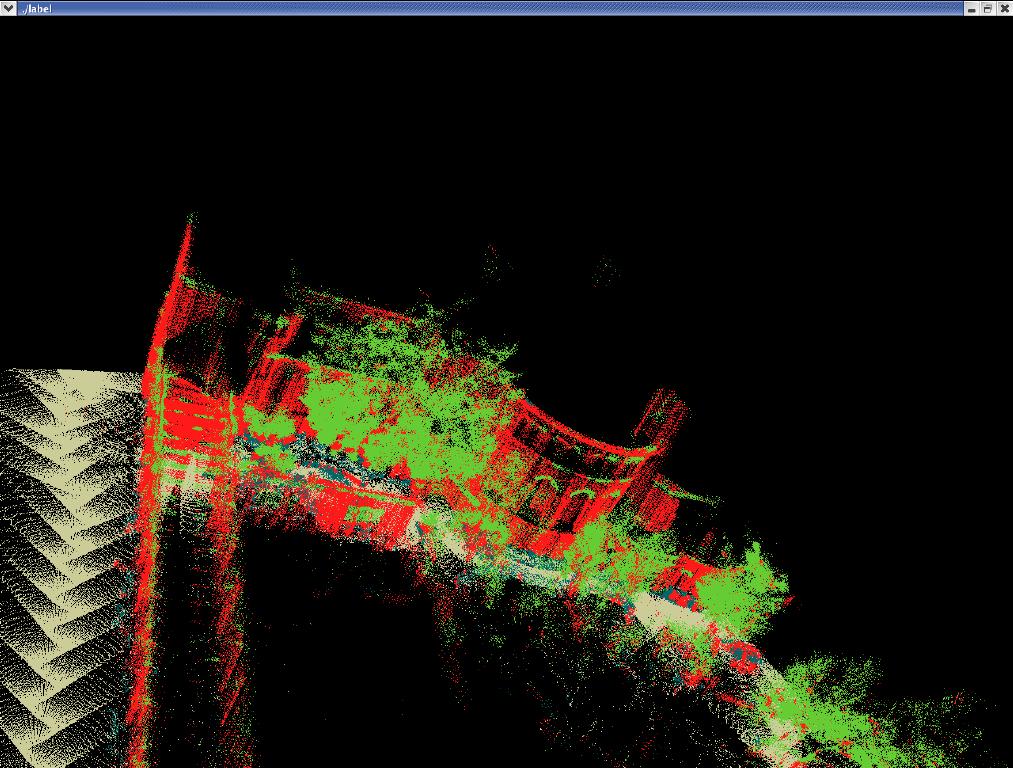

We can see that the voted model performs slightly better than flat: for example, it smooths out trees and some areas of the buildings. Still it fails in areas like arches of buildings where the flat classifier has a locally consistent wrong prediction.

For inference, we used mincut combined with the alpha-expansion algorithm proposed by Boykov, et. al. (Y. Boykov, O. Veksler, R. Zabih, "Fast Approximate Energy Minimization via Graph Cuts"). The ideas used in posing MAP-inference as a graph-cut problem are similar to the ones in "What Energy Functions can be Minimized via Graph Cuts?" Kolmogorov and Zabih. We also used Kolmogorov's modified mincut algorithm (using two trees to search for augmented paths).

We split up the dataset into 16 square (in the xy-plane) regions, the largest of which contains around 3.5 million points. The implementation is largely dominated by I/O time, with the actual mincut taking less than a minute even for the largest segment.

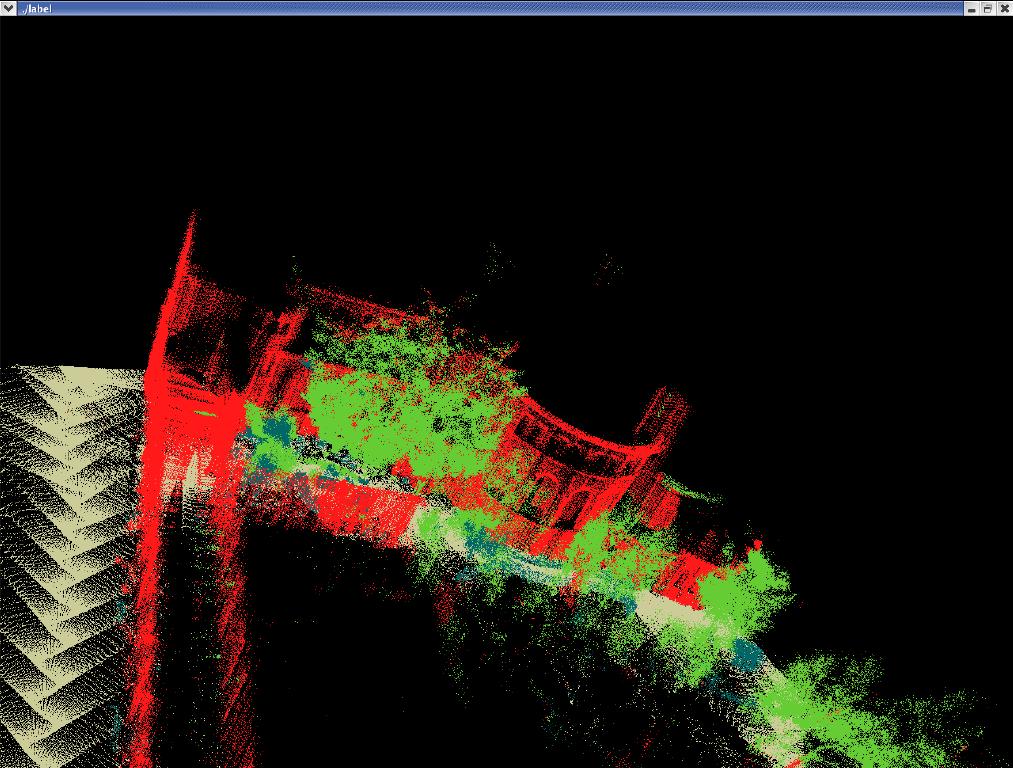

We can see that the predictions are much smoother: for example

building arches and tree trunks are predicted correctly. Here is

another example:

Here is a VRML fly-through animation of the same results: