Monday, April 7, 2008

Sunday, April 6, 2008

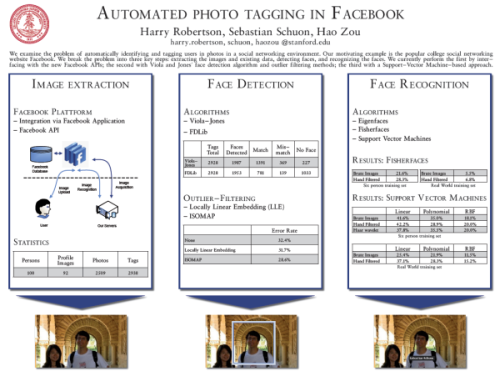

Automated Photo Tagging in Facebook

The Web 2.0 community Facebook offers, among other features, the ability to upload images to photo albums. People in these pictures can be tagged, meaning that their position in the image is marked. Currently users have to do this manually, which is a cumbersome task.

We investigated whether this task could be performed by a computer. The automatic face tagging system presented here is split into three subsystems: obtaining image data from Facebook, detecting faces in the images, and recognizing the detected faces to match them to individuals. First, image data is extracted from Facebook through the Facebook API. Second, the Viola-Jones algorithm is used to detect and locate faces in the obtained images. In addition, we attempt to filter false positives out of the face set using LLE and Isomap. Finally, face recognition (using Fisherfaces and an SVM) is performed on the resulting face set. This allows us to match faces to people, and therefore to tag users in images on Facebook. The proposed system achieves a recognition accuracy of close to 40%, making such systems feasible for real-world use.

Download project report (with Harry Robertson, Hao Zou)

Cite as:

@ARTICLE{schuon_fb07,

title={Automated Photo Tagging in Facebook},

author={Schuon, Sebastian and Robertson, Harry and Zou, Hao},

journal={Stanford CS229 Fall 2007 Project Report},

year={2007}}

Saturday, April 5, 2008

Head Motion Controlled Break Out

In an attempt to create new interfaces for computers, I experimented with webcams (as part of Designing Applications that See). In this instance, the paddle of the classic Breakout game is moved simply by moving your head, which makes for an astonishingly simple way of controlling the game.

One can think of several techniques for tracking the user's head, but I found a very simple one to be fast (real-time operation and low CPU usage are a major concern for a game) and reliable: threshold the image for bright pixels, then average their positions into a mean position, which is assumed to be the head. This position is not necessarily the actual head, since other bright objects may be in the camera's view. These are normally static, however, so the only way to change the mean position is to move the (illuminated) head. How far the paddle moves for a given head movement may differ from background to background, but a human player adapts to that intuitively.
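The thresholding and averaging step described above can be sketched in a few lines. This is a minimal illustration, not the original game code: the frame is assumed to be a grayscale image given as rows of 0..255 intensities, and the names `bright_centroid`, `paddle_position`, and the threshold of 200 are hypothetical choices made for this example.

```python
from typing import List, Optional, Tuple

Frame = List[List[int]]  # grayscale frame: rows of 0..255 intensities (assumed format)


def bright_centroid(frame: Frame, threshold: int = 200) -> Optional[Tuple[float, float]]:
    """Return the mean (x, y) position of all pixels brighter than `threshold`."""
    sum_x = sum_y = count = 0
    for y, row in enumerate(frame):
        for x, value in enumerate(row):
            if value > threshold:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None  # nothing bright in view; the caller can keep the last position
    return sum_x / count, sum_y / count


def paddle_position(centroid_x: float, frame_width: int, field_width: int) -> float:
    """Map the horizontal centroid linearly onto the playing field (hypothetical mapping)."""
    return centroid_x / frame_width * field_width


# Tiny 4x3 test frame with two bright pixels in the middle row.
frame = [
    [0, 0, 0, 0],
    [0, 255, 255, 0],
    [0, 0, 0, 0],
]
centroid = bright_centroid(frame)
```

Because static bright objects contribute a constant share to the mean, only the moving head shifts the result, which is why no explicit background model is needed in this sketch.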

To see the technique in action, see this video:

Machine Learning: Locally Linear Embedding

This work is in the field of machine learning. Until now, most learning techniques have required supervision and used linear models to represent the data. The learning algorithm evaluated in this work, Locally Linear Embedding, is unsupervised and nonlinear. It was first proposed in 2000, but has so far been evaluated only by computer science and mathematics researchers. In my work I focused on some implementation issues that are important to engineers. In addition, some new incremental formulations are proposed.

Download Report (Bachelor Thesis)

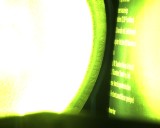

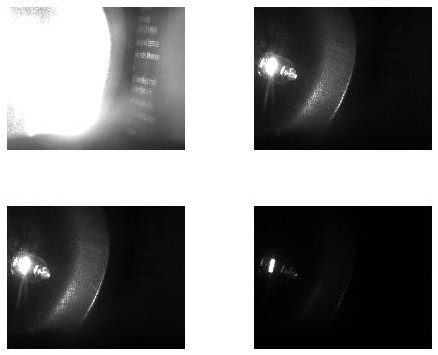

HDR-Imaging for Welding

Original Scene |

|

The image on the left shows the scene as captured by a standard camera. The scene comprises both a bright light source and some text on the right. Using the suggested HDR technique, we can craft an image that shows both the detailed structure of the light source's filament and the text in readable form. Below you can see the four image channels of the raw Bayer image:

For further details on the project, see the Visible Welding Homepage.
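This post does not spell out how the four channels are obtained from the sensor data or how they are combined afterwards. As a rough sketch of the separation step only, assuming an RGGB mosaic layout (an assumption; the actual sensor layout is not stated here) and a raw image given as rows of integer values, the four half-resolution channel images could be extracted like this. The function name `split_bayer_channels` is hypothetical.

```python
from typing import Dict, List

Image = List[List[int]]  # raw sensor values, one list per row (assumed format)


def split_bayer_channels(raw: Image) -> Dict[str, Image]:
    """Split a Bayer mosaic into four half-resolution channel images.

    Assumes an RGGB layout: even rows hold R, G1, R, G1, ...
    and odd rows hold G2, B, G2, B, ...
    """
    if len(raw) % 2 or any(len(row) % 2 for row in raw):
        raise ValueError("expected an image with even width and height")
    return {
        "R": [row[0::2] for row in raw[0::2]],
        "G1": [row[1::2] for row in raw[0::2]],
        "G2": [row[0::2] for row in raw[1::2]],
        "B": [row[1::2] for row in raw[1::2]],
    }


# 4x4 mosaic in which each channel carries a distinct constant value.
raw = [
    [1, 2, 1, 2],
    [3, 4, 3, 4],
    [1, 2, 1, 2],
    [3, 4, 3, 4],
]
channels = split_bayer_channels(raw)
```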

Motion Deblurring

If a camera moves quickly while taking a picture, motion blur results. There are techniques that prevent this effect from occurring, such as moving the lens system or the CCD chip electromechanically. Another approach is to remove the motion blur after the image has been taken, using signal processing algorithms as a post-processing step. For more than 30 years, numerous researchers have developed theories and algorithms for this purpose, which work quite well when applied to artificially blurred images. When these techniques are applied to real-world scenarios, however, they mostly fail miserably. To study why the known algorithms have trouble deblurring naturally blurred images, we built an experimental setup that produces real blurred images with defined parameters in a controlled environment.

For more details, visit the separate Motion Deblurring Page.
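To make the notion of an artificially blurred image concrete: a common synthetic model is to convolve each image row with a uniform box kernel, which corresponds to constant-speed horizontal motion during the exposure. The sketch below illustrates that idealized model and is not the procedure used in the paper; the function name `horizontal_motion_blur` and the border handling (replicating the edge pixel) are assumptions made for this example.

```python
from typing import List

Image = List[List[float]]  # grayscale image, one list per row (assumed format)


def horizontal_motion_blur(image: Image, length: int) -> Image:
    """Blur each row with a box kernel of `length` pixels.

    Models uniform horizontal motion; pixels beyond the left border
    are replaced by the border pixel.
    """
    if length < 1:
        raise ValueError("blur length must be at least 1")
    blurred = []
    for row in image:
        out_row = []
        for x in range(len(row)):
            total = 0.0
            for k in range(length):
                total += row[max(x - k, 0)]  # clamp at the left border
            out_row.append(total / length)
        blurred.append(out_row)
    return blurred


# A single bright pixel is smeared over three pixels.
sharp = [[0, 0, 9, 0, 0]]
smeared = horizontal_motion_blur(sharp, 3)
```

Real blur differs from this model in ways such as non-uniform motion, sensor noise, and saturation, which is one plausible reason algorithms tuned on synthetic data struggle with real images.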

Cite as:

@ARTICLE{schuon_deblur06,

title={Comparison of Motion Deblur Algorithms and Real World Deployment},

author={Schuon, Sebastian and Diepold, Klaus},

journal={57th International Astronautical Congress},

year={2006}}

MOKE: Images of Micromagnets

The Magneto-Optic Kerr Effect (MOKE) can be used to visualize the magnetic field on surfaces. During my time at the Max Planck Institute of Microstructure Physics, Halle, I set up an experiment to record movies of the magnetization of the domain structure of certain materials.

Whisker

The first video clip shows how domain structures form when the target is exposed to a time-varying magnetic field. Here the target was a whisker. The luminance of the target corresponds directly to the magnetic field strength. The captured images were overlaid in software with vectors showing the direction of the magnetic flux.

CU-Sheet

In this second clip the target was a copper sheet. In contrast to the whisker, we observe a different domain pattern (a stacked one, compared to the Landau-Lifshitz structure before). Furthermore, defects in the target material cause domains to form earlier.

The Project Report and a Poster are unfortunately available in German only.

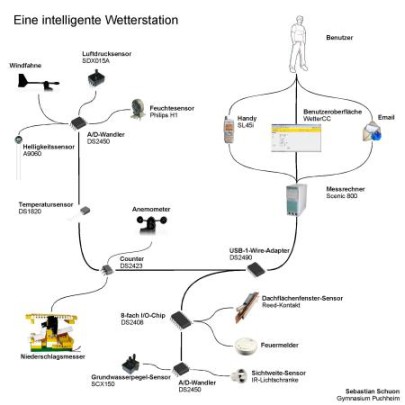

Intelligent Weather Station

A legacy project from my high school days. Since that was in Germany, the docs are also in German...

Abstract:

"My goal was a weather station that operates autonomously and records all measurements digitally. All sensors are to be interconnected via a 1-Wire network so that a computer can process the measurements. Based on these values, the software should make decisions on its own and issue warnings where appropriate.

The result of these considerations is a weather station with sensors for humidity, precipitation, temperature, air pressure, wind speed, wind direction, and visibility. Further sensors are currently in the works to monitor the groundwater level, the state of the smoke detectors, and the roof windows. Combining the sensor data enables the software to send warnings to the user ("It is raining, please close the windows" or "As a precaution, install pumps in the basement; the groundwater level is dangerously high"). These messages can reach the user either via the Internet or by SMS. In addition, the software offers a web interface for evaluating all sensor data. The sensor data can also be exported in standard formats, for example to create climate diagrams."
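The idea of combining sensor readings into warnings can be illustrated with a small rule-based sketch. This is not the original software: the `Readings` fields, the function name `collect_warnings`, and the groundwater limit of 150 cm are hypothetical values chosen only for this example. The two messages mirror the ones quoted in the abstract.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Readings:
    # Hypothetical snapshot of three of the sensors named in the abstract.
    raining: bool
    roof_window_open: bool
    groundwater_level_cm: float


def collect_warnings(r: Readings, groundwater_limit_cm: float = 150.0) -> List[str]:
    """Combine individual sensor values into user-facing warnings."""
    messages = []
    # Rain alone is harmless; it only matters if a roof window is open.
    if r.raining and r.roof_window_open:
        messages.append("It is raining, please close the windows")
    # The limit is an assumed threshold, not a value from the report.
    if r.groundwater_level_cm > groundwater_limit_cm:
        messages.append(
            "As a precaution, install pumps in the basement; "
            "the groundwater level is dangerously high"
        )
    return messages


alerts = collect_warnings(Readings(raining=True, roof_window_open=True, groundwater_level_cm=90.0))
```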

Download Report (German only)
