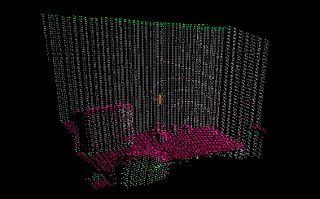

3D Point Cloud (120 deg. FOV)

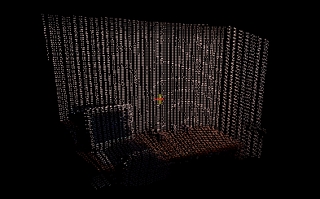

3D Point Cloud (color projection)

3D Point Cloud (reconstructed)

This project augments visual cues from images (or video streams) with 3D range data (e.g. from a laser) to improve object recognition and holistic scene understanding in real-world environments, at real-time data rates. We use a Markov random field (MRF) to reconstruct a dense 3D point cloud from sparse laser data. We then extract visual and spatial cues from the reconstructed point cloud and use them for improved object detection. A model using geometry extracted from the point cloud allows for high-level scene understanding.
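To make the reconstruction step concrete, here is a minimal sketch of how an MRF can densify sparse range measurements on an image grid. It is not the project's actual model: the quadratic data and smoothness potentials, the intensity-based edge weights, the Gauss-Seidel solver, and the names `densify_depth`, `data_weight`, `edge_sigma`, and `iters` are all assumptions chosen for illustration.

```python
import math

def densify_depth(sparse, image, data_weight=10.0, edge_sigma=0.1, iters=500):
    """Fill in a dense depth grid from sparse depth readings (hypothetical sketch).

    sparse: 2D list, a depth value where the laser returned a reading, else None.
    image:  2D list of grayscale intensities in [0, 1], same shape as sparse.

    Assumed energy (not taken from the project):
        E(d) = sum_observed data_weight * (d_i - z_i)^2
             + sum_neighbors w_ij * (d_i - d_j)^2
    where w_ij shrinks when neighboring pixels differ in intensity, so depth
    is smoothed within regions but allowed to jump across image edges.
    """
    rows, cols = len(image), len(image[0])
    known = [z for row in sparse for z in row if z is not None]
    if not known:
        raise ValueError("at least one depth measurement is required")
    mean_depth = sum(known) / len(known)

    # Start from the measurements, with the mean depth everywhere else.
    depth = [[sparse[r][c] if sparse[r][c] is not None else mean_depth
              for c in range(cols)] for r in range(rows)]

    for _ in range(iters):
        for r in range(rows):
            for c in range(cols):
                num, den = 0.0, 0.0
                if sparse[r][c] is not None:
                    num += data_weight * sparse[r][c]
                    den += data_weight
                for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    nr, nc = r + dr, c + dc
                    if 0 <= nr < rows and 0 <= nc < cols:
                        diff = image[r][c] - image[nr][nc]
                        # Floor the weight so the update never divides by zero.
                        w = max(math.exp(-diff * diff / (2 * edge_sigma ** 2)), 1e-6)
                        num += w * depth[nr][nc]
                        den += w
                # Gauss-Seidel step: exact minimizer of E with respect to d_i.
                depth[r][c] = num / den
    return depth

# Usage: a single scanline with readings only at its two ends.
scanline = densify_depth([[1.0, None, None, None, 3.0]],
                         [[0.0, 0.0, 0.0, 1.0, 1.0]])
print([round(d, 2) for d in scanline[0]])
```

With a uniform image the missing depths are interpolated smoothly between the readings; with an intensity edge, as in the usage line, most of the depth change is placed at the edge. A real-time system would need a much faster solver than this pure-Python loop.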

Demonstration

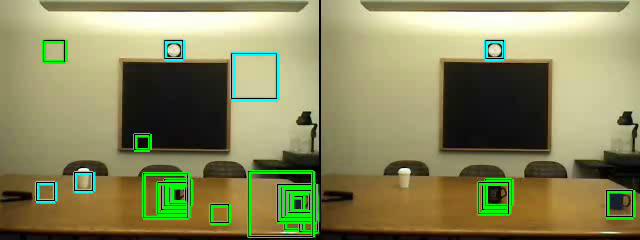

The following video compares vision-only object recognition (left panel) with object recognition incorporating 3D scene understanding (right panel) for two common office/household objects: mugs (green) and wall clocks (cyan). Data was collected using the STAIR Robot. The superiority of the 3D scene understanding results is evident.

avi (6.8MB) | mpeg (10.6MB) | wmv (1.7MB)

Poster

View the poster from our NIPS 2007 demonstration.